The Student Who Said No to AI

The debate over artificial intelligence in education is often framed as a question of academic integrity. It is also a question of energy, water, and what it means to know something.

A college student’s reluctance to use artificial intelligence might seem, at first glance, like ordinary technophobia—the reflexive resistance that greets most disruptive technologies. But her objections, raised in conversation, are more carefully considered than that framing suggests. They touch on two distinct concerns: one broadly ecological, the other deeply personal. Together, they illuminate tensions that institutions, industries, and individuals are only beginning to reckon with.

The Resource Question

The environmental costs of AI are not disputed, though they are frequently underappreciated. A single ChatGPT query consumes roughly ten times the electricity of a standard Google search—a differential that, multiplied across billions of interactions, produces consequences of industrial scale. Global data center electricity consumption is projected to rise sharply in coming years, with some estimates suggesting the increase would be equivalent to adding a country the size of Germany to the world’s power grid. In the United States alone, data centers may soon account for six percent of total electricity consumption—comparable to all household lighting and refrigeration combined.

Water tells a similar story. The familiar saying that it takes a bottle of water to complete an AI query captures something real: both data centers and the power plants that supply them require substantial water for cooling. Between 2022 and 2023, Google’s water consumption rose seventeen percent from a base of 23 billion liters, reaching a volume comparable to the water use of a small municipality; Microsoft’s rose twenty percent over the same period. Both companies attribute the increases substantially to AI infrastructure. Amazon, notably, does not disclose its water consumption figures.

This trajectory is not without precedent. The nineteenth century marked a decisive turn in humanity’s relationship with energy, when coal and steam began powering tools that human muscle once drove. Each successive wave of technology has generally consumed more resources upon introduction, before competitive pressures eventually drive efficiency gains. The pattern holds, though with an important caveat: aggregate consumption tends to keep rising even as per-unit costs fall. AI appears to follow this curve—only steeper.

The optimists point to fusion energy, where experimental reactors in the United States and Europe have raised hopes. Fusion is the energy source of the sun, and its promise is clean, renewable energy. Projects in Europe, the UK, the US, and China are underway, but none has shown that it will work on a known, quantifiable timeline. Microsoft has invested in at least one company promising commercial fusion power; so has Google. Whether that promise materializes in time to offset AI’s near-term resource demands is, at present, an open question.

The Personal Reckoning

The student’s second objection is harder to resolve with data. If she offloads intellectual work to an AI system, will she learn less? Will the result still be meaningfully hers?

These are not frivolous concerns, particularly in a university context where the act of doing and the process of learning are understood to be inseparable. The production of an academic paper involves not only its conclusions but the intellectual labor that generates them—the dead ends, the reconsidered arguments, the gradual mastery of a subject. When AI absorbs that labor, something is lost even if the output looks the same.

There is a specific limitation worth naming here. When AI conducts a literature review, it does not return an exhaustive catalog of relevant sources; it performs its own selection, according to processes it does not fully expose. What is absent is traceability—the methodological transparency that allows a researcher to evaluate how conclusions were reached and decide whether to trust them. In both the sciences and the humanities, methodology is not incidental to knowledge; it is constitutive of it. An AI that cannot show its work is, in an educational context, an unreliable collaborator.

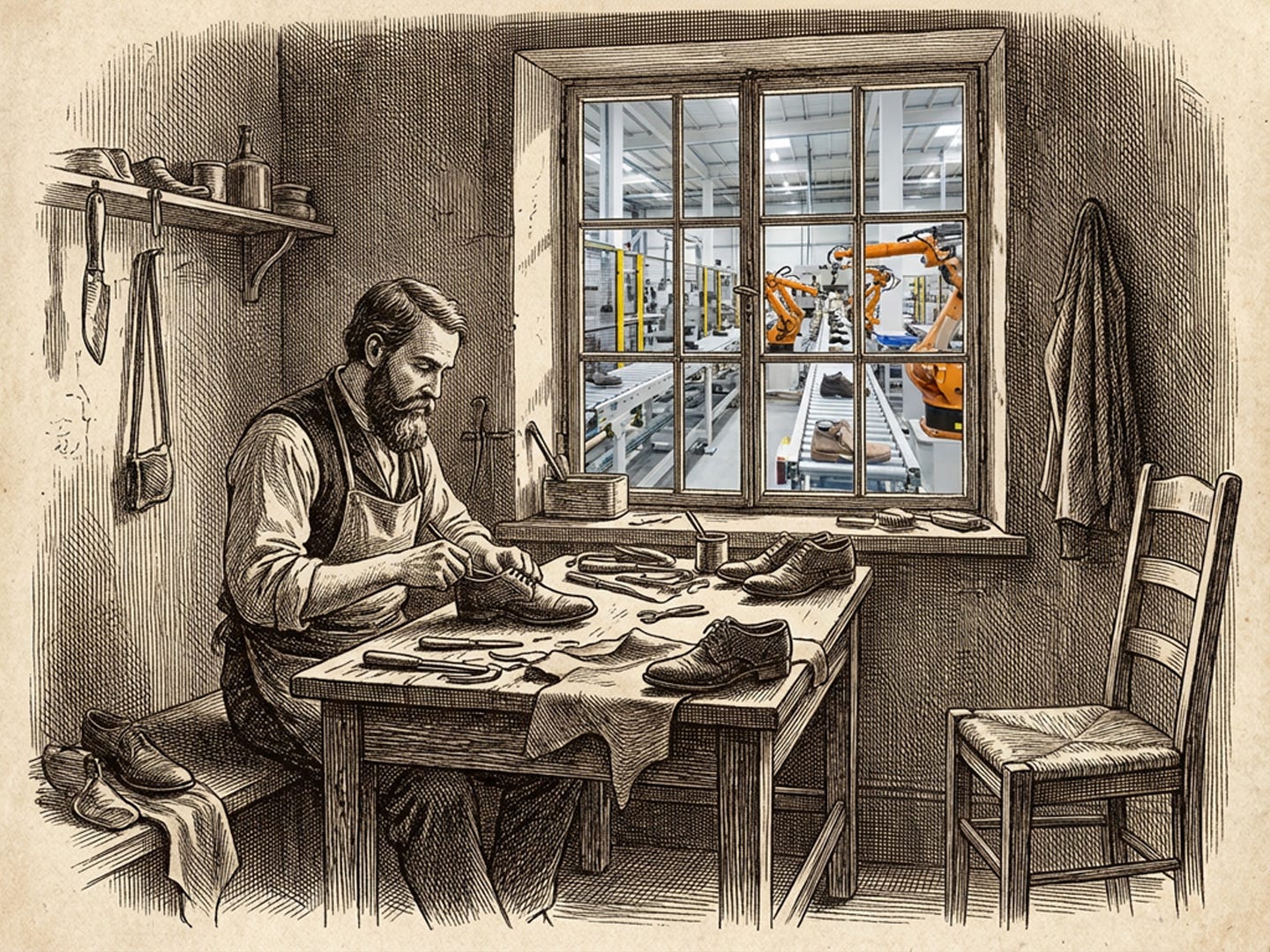

How much AI should be allowed in the production of a paper at school? The question is not only how much learning has been demonstrated; it is also about the pride we lose when our work is made by a machine. This discomfort has a name in philosophical literature: Promethean shame. The term describes the unease that arises when a human-made machine produces results that a human being could not readily match. Consider the artisan shoemaker at the dawn of industrialization, a craftsman who took pride in fitting shoes to individual customers, who understood leather and last and the particular demands of a given foot. He would have watched, with feelings not easily named, as machines began producing footwear by the million, in more colors, more sizes, and more quickly than he ever could. The Olympic movement encodes a version of this instinct: competitions exclude performance-enhancing technologies precisely to preserve a domain in which human beings contend only with each other.

Navigating the Distinction

What practical guidance follows from all this?

One useful framework distinguishes between AI as a crutch and AI as an amplifier. The crutch replaces a capacity the student has not yet developed; the amplifier extends a capacity the student already possesses. The distinction is not always obvious, but it is real.

The pocket calculator offers an instructive analogy. It is the wrong tool for a third-grader still learning multiplication tables—not because calculators are bad, but because the child needs to internalize arithmetic before technology can legitimately substitute for it. Once that foundation is established, the calculator frees the student to engage with more complex problems. The tool serves learning rather than circumventing it.

Entry-level computer science courses at Stanford and other institutions have long used a variation of this principle: “Karel the Robot,” a deliberately minimal teaching language in which students write step-by-step instructions for a simple robot navigating a grid. The point is not that more powerful tools are unavailable. The point is that understanding must precede automation.

For college students—particularly those in later years, with developed critical faculties—the question of whether they are using AI as crutch or amplifier is one they are, in principle, equipped to answer honestly. The student who can identify which cognitive functions she is outsourcing, and evaluate whether she has already internalized them, is in a position to make a legitimate choice. The student who cannot make that distinction is probably not yet ready to delegate.

The shoemaker’s dilemma does not resolve neatly. There may come a point at which AI can perform certain intellectual tasks so thoroughly that human mastery of them becomes, economically speaking, unnecessary—as machine production rendered artisan shoemaking a niche rather than a trade. But intellectual craft is not entirely analogous to physical production. Shoes can be inspected; the outputs of AI reasoning often cannot be verified without the very understanding that AI threatens to displace. That asymmetry is a reason to continue developing the underlying capacities, even as the tools that might supplement them grow more capable.

The student who said no to AI may not be right in every application. But her instinct—that something important is at stake in the question of what we choose to do ourselves—is worth taking seriously.